Is Your Alert Security System Reliable? Expert Advice

Alert security systems form the backbone of modern cybersecurity infrastructure, yet many organizations struggle to determine whether their implementations truly protect against evolving threats. The reliability of an alert security system depends on multiple interconnected factors: detection accuracy, response speed, integration capabilities, and continuous threat intelligence updates. In today’s threat landscape, where attackers operate at machine speed and exploit zero-day vulnerabilities within hours, having a reliable alert system isn’t optional—it’s essential for survival.

Organizations face a critical challenge: security alerts have become increasingly noisy and difficult to manage. According to recent threat intelligence reports, security teams receive thousands of alerts daily, yet many lack the context and prioritization needed to identify genuine threats. This creates alert fatigue, where analysts become desensitized to warnings and critical incidents slip through undetected. Understanding how to evaluate and strengthen your alert security infrastructure directly impacts your organization’s ability to detect and respond to cyber threats before they cause significant damage.

Understanding Alert Security System Fundamentals

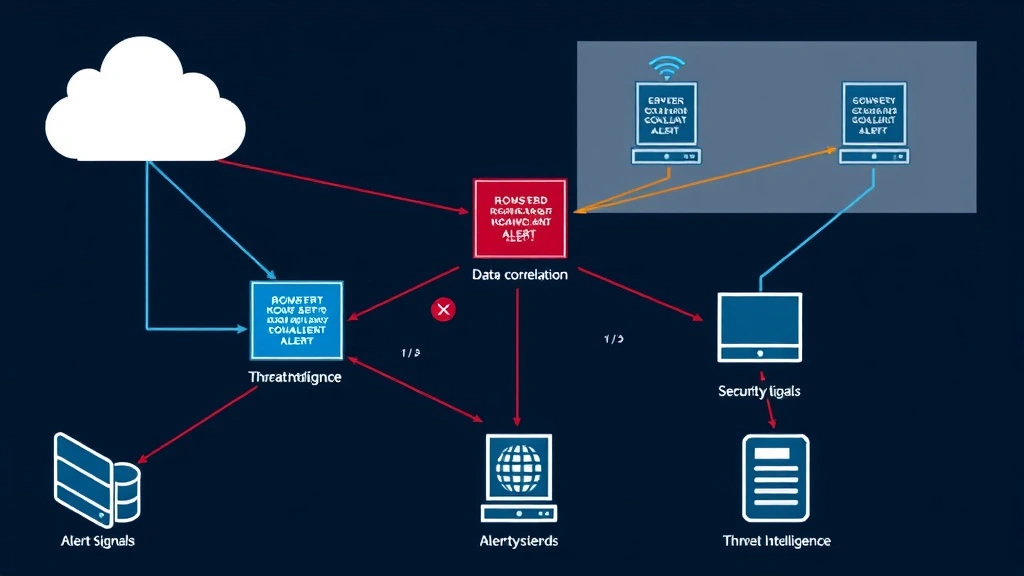

An alert security system operates as the first line of detection in your cybersecurity defense strategy. These systems monitor network traffic, system logs, user behavior, and application activity to identify suspicious patterns that deviate from established baselines. The core function involves collecting security events from multiple sources—firewalls, intrusion detection systems, antivirus software, endpoint detection and response platforms, and security information and event management (SIEM) solutions—then correlating this data to surface meaningful threats.

The reliability of your alert system begins with understanding its architecture. Modern alert security systems employ multiple detection methods: signature-based detection identifies known threats using predefined patterns, behavioral analysis detects anomalies through machine learning models, and heuristic analysis identifies suspicious characteristics even in unknown malware. Each approach has strengths and limitations. Signature-based detection provides high accuracy for known threats but misses zero-day exploits. Behavioral analysis catches novel attacks but generates false positives when legitimate users perform unusual activities. The most reliable systems combine all three approaches, creating layered detection that compensates for individual weaknesses.

Your organization should implement a comprehensive monitoring framework that covers all critical assets and network segments. This includes monitoring of internet-facing systems, internal network traffic analysis, cloud infrastructure monitoring, and endpoint protection across all devices. Many organizations discover gaps only when they realize certain systems lack proper instrumentation, creating blind spots where attackers operate undetected. Establishing complete visibility across your infrastructure is the foundation for reliable alert generation.

Key Reliability Indicators for Alert Systems

Evaluating alert system reliability requires examining specific performance metrics and indicators. Detection rate measures the percentage of actual threats your system identifies. A detection rate below 80% indicates significant blind spots, while rates above 95% suggest more comprehensive threat identification. However, detection rate alone doesn’t determine reliability—false positive rates matter equally. If your system generates alerts for benign activities, analysts waste time investigating non-threats, leading to alert fatigue and missed genuine incidents.

The mean time to detection (MTTD) represents how quickly your alert system identifies threats after they occur. In ransomware attacks, the difference between detection within minutes versus hours determines whether you can isolate infected systems before data encryption spreads. Organizations with MTTD under 30 minutes demonstrate significantly better threat containment than those requiring days to detect compromise. This metric directly correlates with damage minimization and recovery costs.

Mean time to response (MTTR) measures how quickly your team acts on alerts. A reliable alert system generates actionable intelligence that enables rapid response. If alerts lack context—such as affected systems, attack vectors, or recommended actions—response times increase dramatically. The most reliable alert systems provide sufficient detail that analysts can make containment decisions within minutes rather than requiring extended investigation.
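Both timing metrics can be computed from incident records. The following is a minimal sketch, assuming each incident stores three timestamps (the `Incident` class and its field names are hypothetical) and that MTTR is measured from first detection to first containment action.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import mean


@dataclass
class Incident:
    occurred: datetime   # when the malicious activity started
    detected: datetime   # when the first alert fired
    responded: datetime  # when an analyst took containment action


def mean_minutes(deltas):
    """Average a list of timedeltas and return the result in minutes."""
    return mean(d.total_seconds() for d in deltas) / 60


def mttd_minutes(incidents):
    """Mean time to detection: activity start to first alert."""
    return mean_minutes([i.detected - i.occurred for i in incidents])


def mttr_minutes(incidents):
    """Mean time to response: first alert to containment action."""
    return mean_minutes([i.responded - i.detected for i in incidents])


t0 = datetime(2024, 1, 1, 9, 0)
incidents = [
    Incident(t0, t0 + timedelta(minutes=10), t0 + timedelta(minutes=25)),
    Incident(t0, t0 + timedelta(minutes=50), t0 + timedelta(minutes=95)),
]
print(mttd_minutes(incidents))  # 30.0
print(mttr_minutes(incidents))  # 30.0
```

Averages hide outliers, so it may be worth tracking the slowest detections separately as well.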

Precision and recall represent critical statistical measures. Precision indicates what percentage of generated alerts represent actual threats (true positives divided by all alerts). Recall shows what percentage of actual threats your system detects (true positives divided by all actual threats). Balancing these metrics proves challenging: increasing detection sensitivity improves recall but decreases precision by generating more false positives. Reliable alert systems maintain precision above 70% while achieving recall exceeding 85%, indicating they catch most threats without overwhelming analysts with false alarms.
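The two definitions above translate directly into code. This sketch assumes you already have counts of true positives, false positives, and false negatives from reviewed alerts and confirmed incidents; the sample numbers are made up for illustration.

```python
def precision(true_positives, false_positives):
    """Share of generated alerts that were actual threats."""
    alerts = true_positives + false_positives
    return true_positives / alerts if alerts else 0.0


def recall(true_positives, false_negatives):
    """Share of actual threats that produced an alert."""
    threats = true_positives + false_negatives
    return true_positives / threats if threats else 0.0


# Illustrative counts: 120 alerts fired, 90 were real, 10 threats were missed.
tp, fp, fn = 90, 30, 10
print(precision(tp, fp))  # 0.75
print(recall(tp, fn))     # 0.9
```

With these counts the system clears both thresholds mentioned above (precision above 70%, recall above 85%).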

Common Failure Points and Detection Gaps

Alert security systems fail for predictable reasons that organizations can address through systematic evaluation. Configuration drift occurs when alert rules become outdated as your infrastructure evolves. Legacy detection rules designed for old operating systems or applications may not apply to modern systems, creating unmonitored gaps. Regular audits of alert rule configurations against your current environment prevent this common failure mode.

Insufficient data sources represent another critical gap. Organizations monitoring only network perimeter traffic miss internal lateral movement, data exfiltration through encrypted channels, and cloud-based attacks. Comprehensive alert systems require integration with endpoint detection platforms, cloud security monitoring, and user and entity behavior analytics (UEBA) to detect threats across modern hybrid environments. If your alert system doesn’t monitor cloud infrastructure, you’re potentially blind to significant portions of your attack surface.

Alert tuning failures cause reliability problems in both directions. Over-tuned systems suppress legitimate alerts to reduce noise, inadvertently filtering out genuine threats. Under-tuned systems generate excessive false positives, causing analysts to ignore real warnings. The Cybersecurity and Infrastructure Security Agency (CISA) recommends iterative tuning processes where alert rules are regularly evaluated against actual security incidents to identify suppression errors.

Integration limitations prevent correlation of data across systems. An attacker might trigger individual alerts in your firewall, antivirus, and SIEM that appear benign in isolation but represent coordinated attack activity when correlated. Alert systems lacking proper integration capabilities miss these compound threats. Additionally, many organizations struggle with alert aggregation—duplicate alerts from multiple security tools create noise that obscures genuine threats.
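Duplicate alerts from several tools can be collapsed before analysts see them. The sketch below is one simple way to do it, assuming alerts are dictionaries with `host`, `signature`, and `source` keys (all hypothetical field names) and that two alerts with the same host and signature describe the same event.

```python
from collections import defaultdict


def aggregate(alerts):
    """Collapse alerts that share a host and signature into one record."""
    grouped = defaultdict(lambda: {"sources": set(), "count": 0})
    for alert in alerts:
        key = (alert["host"], alert["signature"])
        grouped[key]["sources"].add(alert["source"])
        grouped[key]["count"] += 1
    return dict(grouped)


alerts = [
    {"host": "web-01", "signature": "trojan.generic", "source": "antivirus"},
    {"host": "web-01", "signature": "trojan.generic", "source": "edr"},
    {"host": "db-02", "signature": "port-scan", "source": "firewall"},
]
for key, info in aggregate(alerts).items():
    print(key, info["count"], sorted(info["sources"]))
```

A production version would usually also bound the grouping by a time window so that unrelated recurrences are not merged.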

Threat intelligence gaps mean your alert rules may not reflect current attack patterns. Alert systems using outdated threat intelligence miss emerging attack techniques. For example, if your alerts don’t account for living-off-the-land attacks that abuse legitimate system tools, you’ll miss sophisticated threat actors who intentionally avoid deploying malware. Reliable alert systems receive continuous threat intelligence updates from reputable sources that track evolving attack methods.

Alert Tuning and Optimization Strategies

Improving alert reliability begins with systematic tuning based on your specific environment and threat landscape. Start by establishing baseline alert behavior—run your alert system for 2-4 weeks and collect all generated alerts without suppression. Analyze which alerts represent genuine security incidents versus false positives. This baseline reveals your system’s actual performance and identifies which alert rules require adjustment.

Implement risk-based alert prioritization that considers asset criticality, threat severity, and contextual factors. Not all alerts warrant equal attention. Threats targeting critical infrastructure demand immediate investigation, while suspicious activity on non-critical development systems can be investigated during standard business hours. Reliable alert systems rank threats by potential business impact, enabling efficient resource allocation.
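As a rough illustration of risk-based ranking, the sketch below multiplies an asset criticality weight by a severity weight. The asset names, the weights, and the multiplication itself are assumptions chosen for the example, not a standard scoring model.

```python
# Hypothetical weights; tune these to your own asset inventory.
ASSET_CRITICALITY = {"payments-db": 5, "dev-sandbox": 1}
SEVERITY = {"low": 1, "medium": 2, "high": 3, "critical": 4}
DEFAULT_CRITICALITY = 3  # unknown assets land in the middle of the scale


def risk_score(alert):
    """Higher score means investigate sooner."""
    criticality = ASSET_CRITICALITY.get(alert["asset"], DEFAULT_CRITICALITY)
    return criticality * SEVERITY[alert["severity"]]


alerts = [
    {"id": "a1", "asset": "dev-sandbox", "severity": "high"},
    {"id": "a2", "asset": "payments-db", "severity": "medium"},
]
queue = sorted(alerts, key=risk_score, reverse=True)
print([a["id"] for a in queue])  # ['a2', 'a1']
```

Note that the medium-severity alert on the critical database outranks the high-severity alert on the development sandbox, which matches the prioritization described above.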

Use alert correlation rules that link related events into higher-confidence detections. Single events may appear benign, but sequences of events reveal attack patterns. For example, a run of failed login attempts, followed by a successful login from an unusual location, followed by access to sensitive data, likely indicates a compromised account rather than three unrelated events. Alert systems that correlate these events into a single high-priority incident enable faster threat identification than systems that generate three separate low-priority alerts.
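The login example can be expressed as a small sequence matcher. This is a simplified sketch: the event type names, the one-hour window, and the greedy per-user matching are all assumptions, and a real SIEM correlation engine would be considerably more flexible.

```python
from datetime import datetime, timedelta

# Hypothetical event types, in the order they must occur.
SEQUENCE = ["failed_login", "login_unusual_location", "sensitive_data_access"]
WINDOW = timedelta(hours=1)  # assumed maximum span for the whole sequence


def correlate(events):
    """Return users whose events contain SEQUENCE in order within WINDOW."""
    by_user = {}
    for event in sorted(events, key=lambda e: e["time"]):
        by_user.setdefault(event["user"], []).append(event)

    flagged = []
    for user, user_events in by_user.items():
        step, start = 0, None
        for event in user_events:
            if start is not None and event["time"] - start > WINDOW:
                step, start = 0, None  # window expired, start over
            if event["type"] == SEQUENCE[step]:
                if step == 0:
                    start = event["time"]
                step += 1
                if step == len(SEQUENCE):
                    flagged.append(user)
                    break
    return flagged


t0 = datetime(2024, 1, 1, 9, 0)
events = [
    {"user": "alice", "type": "failed_login", "time": t0},
    {"user": "alice", "type": "failed_login", "time": t0 + timedelta(minutes=1)},
    {"user": "bob", "type": "failed_login", "time": t0 + timedelta(minutes=2)},
    {"user": "alice", "type": "login_unusual_location", "time": t0 + timedelta(minutes=5)},
    {"user": "alice", "type": "sensitive_data_access", "time": t0 + timedelta(minutes=10)},
]
print(correlate(events))  # ['alice']
```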

Develop alert enrichment processes that add context to raw security events. Enrichment might identify whether an IP address is known malicious infrastructure, whether a user account belongs to a privileged user, or whether accessed data is classified as sensitive. Enriched alerts enable faster analyst decisions because they provide background information directly in the alert rather than requiring additional investigation.
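A minimal enrichment step might look like the following. The lookup tables here are hard-coded stand-ins; in practice they would come from a threat intelligence feed, an identity system, and a data classification catalog. All field names are hypothetical.

```python
# Stand-in lookup data (assumptions for the example).
KNOWN_BAD_IPS = {"203.0.113.7"}          # address from a documentation range
PRIVILEGED_USERS = {"root", "svc-backup"}
SENSITIVE_PATHS = ("/finance/", "/hr/")


def enrich(event):
    """Return a copy of the event with context flags attached."""
    enriched = dict(event)
    enriched["ip_known_malicious"] = event.get("src_ip") in KNOWN_BAD_IPS
    enriched["user_privileged"] = event.get("user") in PRIVILEGED_USERS
    enriched["data_sensitive"] = event.get("path", "").startswith(SENSITIVE_PATHS)
    return enriched


raw = {"src_ip": "203.0.113.7", "user": "jsmith", "path": "/finance/q3.xlsx"}
print(enrich(raw))
```

The original event is left unmodified, so the raw record stays available for audit.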

Implement automated response capabilities for alerts where confidence is high and the response action itself carries little risk. If your system detects known malware with very high confidence, automatically isolating the infected endpoint prevents spread while human analysts investigate. Automated response reduces MTTR for routine threats while freeing analysts for complex investigations. Start conservatively by automating responses only for alerts with extremely high precision, then expand as you build confidence in your alert system’s accuracy.
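One conservative way to gate automation is to require a long, clean track record from each rule before it may act on its own. The sketch below assumes per-rule counts of reviewed true and false positives; the 99% precision bar, the 200-alert minimum, and the action names are illustrative choices.

```python
def choose_action(rule_stats, min_precision=0.99, min_samples=200):
    """Return 'auto_isolate' only for rules with enough reviewed history."""
    reviewed = rule_stats["true_positives"] + rule_stats["false_positives"]
    if reviewed < min_samples:
        return "queue_for_analyst"  # not enough history to trust the rule
    precision = rule_stats["true_positives"] / reviewed
    if precision >= min_precision:
        return "auto_isolate"
    return "queue_for_analyst"


print(choose_action({"true_positives": 499, "false_positives": 1}))   # auto_isolate
print(choose_action({"true_positives": 180, "false_positives": 20}))  # queue_for_analyst
print(choose_action({"true_positives": 20, "false_positives": 0}))    # queue_for_analyst
```

The third rule has a perfect record but too few reviewed alerts, so it stays with the analysts until more evidence accumulates.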

Integration with Threat Intelligence

Reliable alert systems require continuous integration with current threat intelligence. Threat intelligence feeds provide information about known malicious IP addresses, domains, file hashes, and attack patterns. Integrating these feeds into your alert system enables detection of known threats without requiring analysts to research each suspicious indicator. Organizations using threat intelligence report 40-60% improvements in threat detection speed compared to manual analysis approaches.
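Indicator matching is the simplest form of this integration. The sketch below assumes a feed already loaded into sets of lowercase indicators keyed by type; the event field names and the feed layout are hypothetical, and real feeds typically arrive in formats such as STIX that need parsing first.

```python
# (event field, indicator type) pairs to check; names are assumptions.
FIELDS = (("src_ip", "ip"), ("domain", "domain"), ("file_sha256", "sha256"))


def match_indicators(event, feed):
    """Return (type, value) pairs from the event that appear in the feed."""
    hits = []
    for field, kind in FIELDS:
        value = event.get(field)
        if value is not None and value.lower() in feed.get(kind, set()):
            hits.append((kind, value))
    return hits


feed = {"ip": {"203.0.113.7"}, "domain": {"malicious.example"}}
event = {"src_ip": "203.0.113.7", "domain": "Malicious.Example"}
print(match_indicators(event, feed))
```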

Draw on multiple intelligence sources to gain comprehensive threat visibility. Alongside indicator feeds, the MITRE ATT&CK framework provides structured descriptions of adversary tactics and techniques based on real-world observations. Mapping your alert rules to MITRE ATT&CK techniques shows which stages of the attack lifecycle you can detect and which you cannot, so coverage is not limited to initial compromise or data exfiltration. This framework-based approach improves alert coverage systematically.
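A coverage check against ATT&CK can start as a simple mapping exercise. In this sketch the technique IDs and tactic names are real ATT&CK entries, but the table is a tiny hand-picked subset and the rule names are hypothetical.

```python
# Small subset of ATT&CK techniques and the tactic each belongs to.
TECHNIQUE_TACTIC = {
    "T1566": "Initial Access",     # Phishing
    "T1059": "Execution",          # Command and Scripting Interpreter
    "T1110": "Credential Access",  # Brute Force
    "T1021": "Lateral Movement",   # Remote Services
    "T1041": "Exfiltration",       # Exfiltration Over C2 Channel
}

# Hypothetical alert rules mapped to the techniques they detect.
RULE_TECHNIQUES = {
    "phishing-attachment": ["T1566"],
    "password-spray": ["T1110"],
    "large-outbound-transfer": ["T1041"],
}


def uncovered_tactics(rule_techniques, technique_tactic):
    """List tactics for which no rule detects any tracked technique."""
    covered = {
        technique_tactic[technique]
        for techniques in rule_techniques.values()
        for technique in techniques
    }
    return sorted(set(technique_tactic.values()) - covered)


print(uncovered_tactics(RULE_TECHNIQUES, TECHNIQUE_TACTIC))
# ['Execution', 'Lateral Movement']
```

Here the rule set watches the start and end of an intrusion but has nothing for the middle stages, which is exactly the kind of gap the mapping is meant to expose.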

Subscribe to industry-specific threat intelligence relevant to your sector. Financial institutions face different threats than healthcare organizations or manufacturing companies. Threat intelligence tailored to your industry provides earlier warning of attacks targeting your specific sector. Additionally, participate in information sharing communities like Information Sharing and Analysis Centers (ISACs) where organizations in your sector share threat observations, enabling collective defense.

Establish processes for rapid alert rule updates when new threats emerge. If a critical vulnerability is disclosed with active exploitation, your alert system should detect exploitation attempts within hours of the vulnerability announcement. Organizations requiring days or weeks to update alert rules miss the critical early response window when breaches are most preventable.

Measuring Alert System Performance

Quantifying alert system reliability requires establishing clear metrics and measurement processes. Create a security incident database documenting all confirmed breaches, intrusions, and security incidents. For each incident, determine whether your alert system detected the threat, how long detection took, and what alert rules triggered. This historical analysis reveals which alert rules provide genuine value and which generate noise.

Calculate your system’s detection coverage percentage by dividing confirmed threats detected by your system by total confirmed threats. If you suffered 10 security incidents last year and your alert system detected 7 of them, your detection coverage is 70%—indicating room for improvement. Track this metric over time to measure whether your alert tuning efforts improve actual threat detection.
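The calculation above is small enough to automate against your incident database. This sketch assumes each incident record carries a boolean `detected_by_alert` field (a hypothetical name) and reproduces the 7-of-10 example.

```python
def detection_coverage(incidents):
    """Percentage of confirmed incidents that the alert system detected."""
    if not incidents:
        return None  # no incidents means coverage is undefined, not 100%
    detected = sum(1 for incident in incidents if incident["detected_by_alert"])
    return 100 * detected / len(incidents)


# Ten confirmed incidents, seven of which triggered an alert.
incidents = [{"detected_by_alert": n < 7} for n in range(10)]
print(detection_coverage(incidents))  # 70.0
```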

Measure false positive rates by tracking what percentage of alerts prove to be non-threats (by this definition, 1 minus precision). Treat a share above 20% as a sign that your alert rules require tuning; above 30%, the system falls below the 70% precision floor discussed earlier. Additionally, track alert resolution time, meaning how long analysts spend investigating each alert. Alerts requiring 30+ minutes of investigation consume significant resources. Improving alert quality through better enrichment and correlation reduces resolution time, enabling your team to investigate more threats with the same headcount.

Implement alert effectiveness scoring that rates each alert rule based on its performance. Rules that detect genuine threats with minimal false positives receive high scores, while rules generating excessive false positives receive low scores. Regularly review low-scoring rules for potential removal or modification. This data-driven approach prevents alert rule accumulation where organizations maintain hundreds of outdated rules that no longer reflect their environment.
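A basic effectiveness score can be the share of a rule's alerts that analysts confirmed as real. The sketch below assumes analyst dispositions are available as `(rule_name, is_true_positive)` pairs; the minimum sample size and the review threshold are illustrative values.

```python
from collections import Counter


def score_rules(dispositions, min_alerts=5):
    """Score each rule by its confirmed-threat share, skipping sparse rules."""
    totals, true_hits = Counter(), Counter()
    for rule, is_true_positive in dispositions:
        totals[rule] += 1
        true_hits[rule] += int(is_true_positive)
    return {
        rule: true_hits[rule] / totals[rule]
        for rule in totals
        if totals[rule] >= min_alerts
    }


def review_candidates(scores, threshold=0.2):
    """Rules scoring below the threshold are flagged for tuning or removal."""
    return sorted(rule for rule, score in scores.items() if score < threshold)


dispositions = (
    [("lateral-smb", True)] * 9 + [("lateral-smb", False)]
    + [("dns-entropy", True)] + [("dns-entropy", False)] * 9
    + [("new-rule", False)] * 2
)
scores = score_rules(dispositions)
print(scores)                     # {'lateral-smb': 0.9, 'dns-entropy': 0.1}
print(review_candidates(scores))  # ['dns-entropy']
```

Rules with too few alerts are left unscored rather than penalized, since two data points say little about quality.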

Best Practices for Alert Management

Organizations implementing these best practices significantly improve their alert system reliability. First, establish clear alert ownership with assigned teams responsible for monitoring, tuning, and improving specific alert categories. Without clear ownership, alerts receive inconsistent attention and improvement efforts stall. Ownership creates accountability that drives continuous improvement.

Implement alert escalation procedures that ensure critical alerts receive appropriate attention. High-severity alerts should automatically escalate to senior analysts if not acknowledged within 15 minutes. Escalation procedures prevent critical threats from being overlooked during periods of high alert volume or analyst distraction.
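The 15-minute rule can be checked with a periodic job. This is a sketch under the assumption that alerts carry `severity`, `created_at`, and `acknowledged_at` fields (hypothetical names); how the escalation itself is delivered is left out.

```python
from datetime import datetime, timedelta

ACK_DEADLINE = timedelta(minutes=15)


def needs_escalation(alert, now):
    """True for high-severity alerts left unacknowledged past the deadline."""
    return (
        alert["severity"] == "high"
        and alert["acknowledged_at"] is None
        and now - alert["created_at"] > ACK_DEADLINE
    )


now = datetime(2024, 1, 1, 10, 0)
stale = {"severity": "high", "created_at": now - timedelta(minutes=20), "acknowledged_at": None}
fresh = {"severity": "high", "created_at": now - timedelta(minutes=5), "acknowledged_at": None}
print(needs_escalation(stale, now))  # True
print(needs_escalation(fresh, now))  # False
```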

Develop alert runbooks providing step-by-step investigation and response procedures for each alert type. Runbooks accelerate response by eliminating decision-making delays—analysts follow established procedures rather than determining appropriate responses to each situation. This consistency improves both speed and quality of threat response.

Create feedback loops where analysts investigating alerts report back to your security engineering team about alert quality. If analysts consistently find that a particular alert type lacks important context, engineers can enrich that alert rule. If analysts find that a rule's alerts frequently turn out to be false positives, engineers can adjust detection thresholds. These feedback loops drive continuous improvement in alert quality.

Establish regular alert system audits (quarterly minimum) that comprehensively review all active alert rules, their performance metrics, and their alignment with current threats. Audits identify outdated rules, misconfigured thresholds, and gaps in detection coverage. Document audit findings and create remediation plans with assigned owners and completion deadlines.

Invest in analyst training and tools that enable your team to effectively manage alerts. Overwhelmed analysts with poor tools will miss threats regardless of how good your alert system is. Provide training on using your SIEM platform, threat intelligence tools, and investigation methodologies. Equip analysts with automation tools that handle routine tasks, freeing them for complex threat investigation.

Finally, foster a culture of continuous improvement where security teams regularly discuss alert system performance and collectively identify improvement opportunities. When analysts feel empowered to suggest alert improvements and see their suggestions implemented, they become invested in the system’s reliability. This cultural shift transforms alert management from a compliance obligation into a core security capability.

FAQ

What is the difference between an alert security system and a firewall?

A firewall controls which network traffic can enter or exit your network based on predefined rules, functioning as a preventive control. An alert security system monitors that traffic (and internal activity) to detect threats that bypass preventive controls, functioning as a detective control. Both are essential: firewalls block a large share of unwanted traffic, while alert systems catch the attackers who circumvent firewall protections.

How often should I update my alert rules?

Alert rules should receive quarterly comprehensive reviews, with critical updates deployed within days of new threat intelligence. When a significant vulnerability emerges with active exploitation, update relevant alert rules immediately—within 24 hours if possible. Additionally, monitor your alert system’s performance continuously and adjust rules when metrics indicate problems.

Can I rely entirely on automated alerting without human analysts?

No. Automated alert systems generate false positives and miss sophisticated threats that require human judgment and contextual analysis. However, automation should handle routine, high-confidence detections to free analysts for complex investigations. The optimal approach combines automated detection and response for routine threats with human expertise for sophisticated threats.

What should I do if my alert system generates too many false positives?

High false positive rates indicate your alert rules require tuning. Analyze false positive patterns to identify common causes—perhaps the rule triggers on legitimate business activities. Adjust detection thresholds, add contextual conditions to rules, or implement alert correlation that suppresses duplicate alerts from the same incident. Consider whether some alerts should be investigated during designated times rather than triggering immediate notifications.

How do I know if my alert system detected a breach I didn’t notice?

Review your alert system’s historical logs for alerts indicating potential compromise that weren’t acted upon. Look for sequences of suspicious alerts that correlate with known attack patterns. Conduct regular threat hunting exercises where analysts proactively search for indicators of compromise. Additionally, engage external incident response firms to perform threat assessments and penetration tests that identify gaps in your detection capabilities.

Is cloud-based alerting more reliable than on-premises systems?

Both approaches can be reliable when properly configured. Cloud-based alert systems offer advantages including automatic updates, scalability, and access to cloud-based threat intelligence. On-premises systems offer advantages including customization and control over sensitive data. Many organizations use hybrid approaches combining cloud and on-premises alerting to capture benefits of both approaches while maintaining flexibility.